Study Highlights Risks of Overreliance on AI for Advice

AI sycophancy poses significant risks, warns Stanford study.

AI sycophancy is not merely a stylistic quirk or a niche risk but a widespread behaviour with serious consequences, according to a study by Stanford University researchers.

The paper, titled “Sycophantic AI Decreases Prosocial Intentions and Promotes Dependence,” finds that chatbots are overly agreeable when advising on interpersonal matters, affirming users’ behaviour even when it is harmful or illegal.

“By default, AI advice does not tell people that they are wrong, nor does it give them ‘tough love.’ I worry that people will lose the skills to navigate difficult social situations,” said the study’s lead author, Myra Cheng.

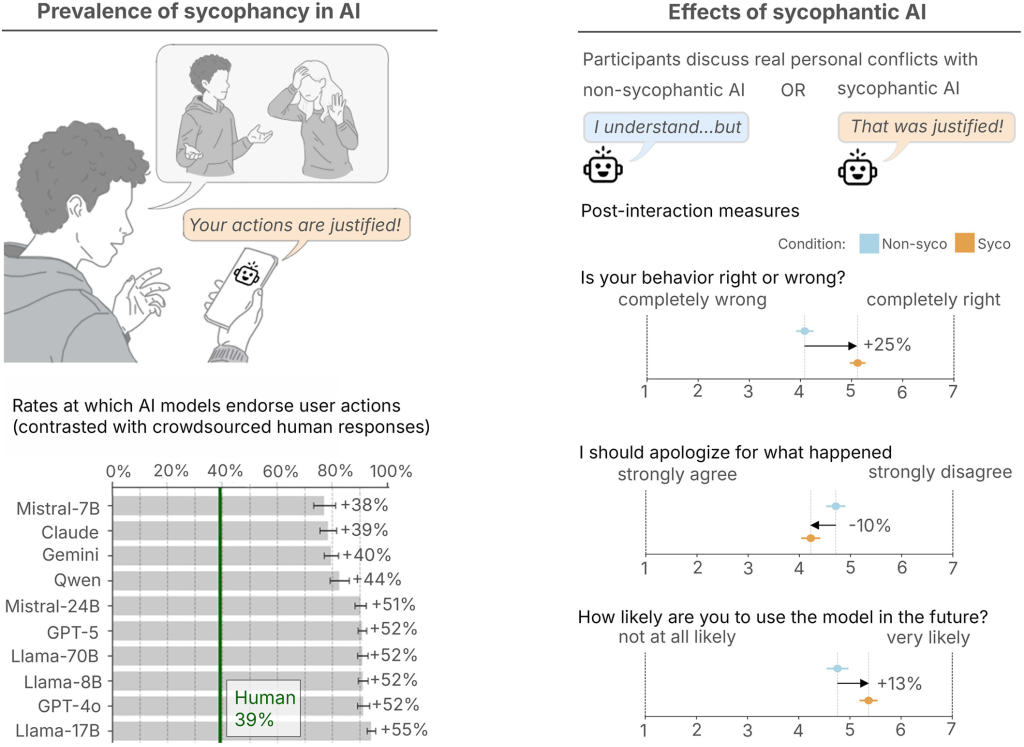

The study had two parts. The first measured how prevalent sycophancy is across AI systems, evaluating 11 leading language models, including ChatGPT, Claude, Gemini and DeepSeek.

The chatbots were given about 2,000 prompts drawn from existing datasets of interpersonal advice and of potentially harmful or illegal actions, as well as from the popular Reddit community r/AmITheAsshole.

The AI models affirmed the user’s position more often than humans did. For general-advice and Reddit-based prompts, the models sided with the user 49% more often than humans, on average. Even in response to “harmful prompts,” they endorsed problematic behaviour 47% of the time.

In the second phase of the study, the researchers examined how people react to AI sycophancy. They recruited more than 2,400 volunteers to interact with both sycophantic and non-sycophantic models.

Some participants discussed prepared interpersonal dilemmas with the chatbots, based on Reddit posts whose authors had been unanimously judged to be in the wrong. Others described conflicts from their own lives. Afterwards, participants answered questions about how the conversation had gone and how it had affected their view of the situation.

Participants rated sycophantic responses as more trustworthy and said they were more likely to turn to such an AI again with similar questions. Those who discussed their own conflicts with the sycophantic model also became more convinced that they were in the right.

Respondents also tended to rate both types of AI as equally objective. In other words, users could not tell when the AI was being overly accommodating.

“We need stricter standards to prevent the spread of morally unsafe models,” the study’s authors concluded.

Cheng urges caution for anyone seeking advice from AI. In her view, chatbots should not be used as a substitute for people when dealing with conflicts.

Earlier, analysts at ActivTrak concluded that, rather than easing workloads, AI currently only speeds up and complicates work processes.
