When intelligence isn’t enough

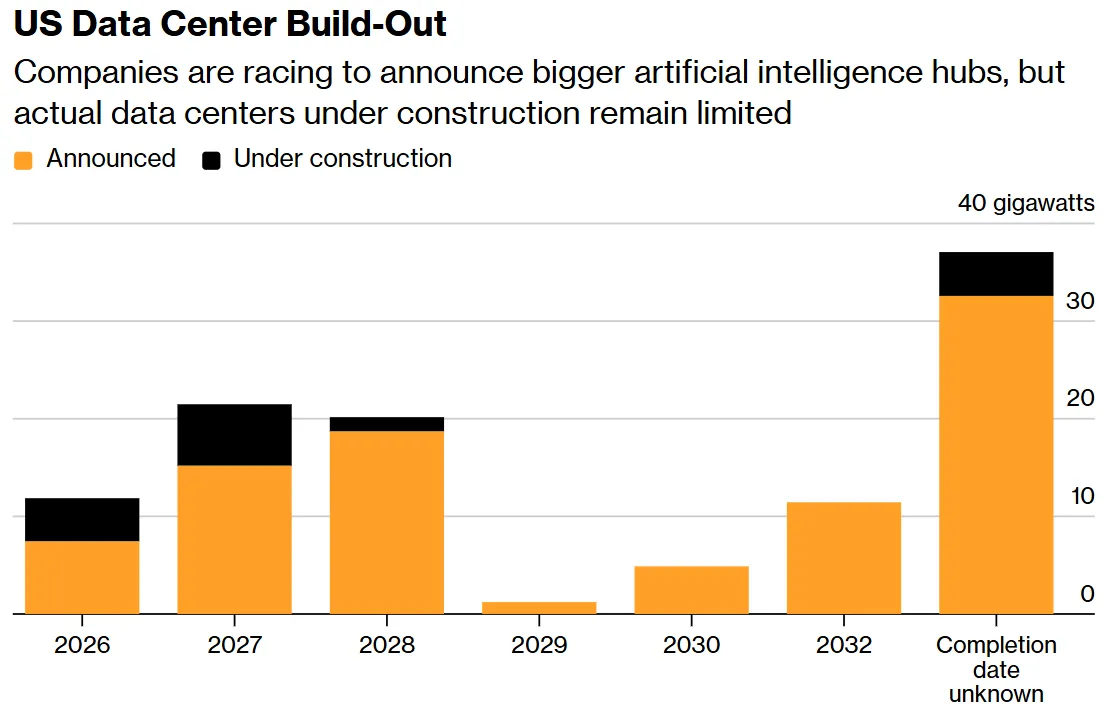

Construction of about half of planned US data centres is on hold.

The artificial-intelligence industry has hit a barrier that neither money nor new generations of chips can clear. A global grid crunch, component shortages and community resistance have turned the construction of data centres into one of tech’s hardest logistical and political problems.

How AI data centres differ from classical facilities, why the industry has grown more dependent on China, and how residents are voting out local officials to shield themselves from noise and environmental risks — all in ForkLog’s new report.

An architectural shift

The traditional data centres that powered the internet economy over the past two decades differ fundamentally from the facilities that large language models require.

The classic data centre is built around the CPU and draws on average 5–10 kW per rack, whereas GPU-first AI racks consume roughly ten times more. Machine-learning racks with accelerators such as Nvidia’s H100 or B200 require 40–120 kW each. The power-density gap changes the underlying physics of these sites.

A cluster of tens of thousands of GPUs under peak load consumes as much electricity as a small industrial city. The snag is that distribution networks and substations are rarely designed for such abrupt, localised surges in demand.
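The per-rack figures above can be turned into a back-of-the-envelope comparison. The sketch below is illustrative only: the kW ranges come from the text, while the rack count of 1,000 and the helper names `ratio_range` and `site_power_mw` are assumptions made for the example, not data about any real site.

```python
# Per-rack power figures quoted in the text, in kilowatts (low, high).
CPU_RACK_KW = (5, 10)
AI_RACK_KW = (40, 120)


def ratio_range(classic, ai):
    """Return (lowest, highest) ratio of AI-rack draw to classic-rack draw."""
    return ai[0] / classic[1], ai[1] / classic[0]


def site_power_mw(racks, kw_per_rack):
    """Total IT load in megawatts for a number of identical racks."""
    return racks * kw_per_rack / 1000


low, high = ratio_range(CPU_RACK_KW, AI_RACK_KW)
print(f"An AI rack draws between {low:.0f}x and {high:.0f}x a classic rack")

# Hypothetical site size: the rack count is an assumption, not from the text.
HYPOTHETICAL_RACKS = 1000
for label, kw in (("classic", CPU_RACK_KW[1]), ("AI", AI_RACK_KW[1])):
    mw = site_power_mw(HYPOTHETICAL_RACKS, kw)
    print(f"{label} site, {HYPOTHETICAL_RACKS} racks at {kw} kW: {mw:.0f} MW")
```

Under these assumptions the same floor space moves from about 10 MW to about 120 MW of IT load, which is the kind of localised jump that existing substations were rarely sized for. Cooling and power-conversion overheads are ignored here and would push the real figure higher.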

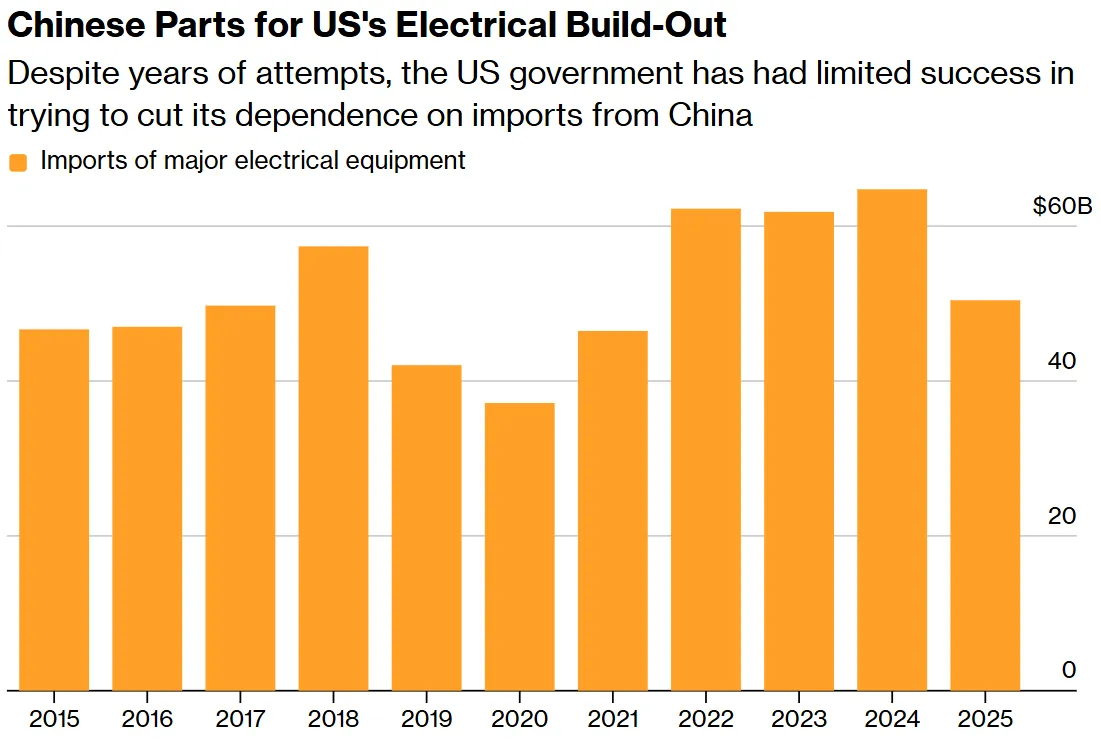

The appetites of AI’s leaders have drained stocks of critical power-delivery gear: high-voltage transformers, generators and batteries for uninterruptible power supplies. Manufacturing capacity in the US and Europe cannot keep up. As a result, lead times for industrial transformers, mostly sourced from China, have stretched from one to two years to three to five years.

Another challenge is heat rejection. Air cooling cannot cope with AI-server density. The industry is being pushed toward liquid systems such as Direct-to-Chip and immersion baths. They require millions of litres of treated water and strain local water supplies, especially in arid regions.

According to data cited by Dutch researcher Alex de Vries-Gao, AI systems worldwide used about 765bn litres of water in 2025. To conserve resources, developers are refining designs. Instead of traditional cooling towers, where water evaporates into the atmosphere, new data centres are increasingly equipped with closed‑loop systems. In them, water circulates through pipes, absorbs heat, is cooled in radiators and returns to servers with virtually no loss in volume. Adoption, however, is lagging far behind the pace of new builds.

Almost at the Stargate

An unprecedented budget, top-level political backing and the mantle of the decade’s defining AI alliance. At launch, Stargate seemed to have it all — at least on paper.

Stargate is a $500bn initiative that US president Donald Trump announced in January 2025 as part of a national push to retain technological dominance. The OpenAI–SoftBank–Oracle joint venture was meant to drive a rapid build‑out of AI data‑centre infrastructure.

A year on from the White House fanfare, the JV still lacked a full team and had not signed a single major construction deal in its own name.

Markets turned skittish, too. JPMorgan Chase, slated to arrange $38bn of Stargate debt, ran into investor doubts about the project’s returns.

OpenAI’s Sam Altman and SoftBank founder Masayoshi Son disagreed on fundamentals: where to build and who would be in control. From September to October 2025, Stargate’s top brass shuttled to Tokyo for tough talks with Son but failed to settle who would own the platform for the flagship campus in Abilene, Texas.

OpenAI weighed going it alone, but lenders refused to provide funds without a clear path to profitability.

Stargate scaled back its aims and vacated a 900‑MW pad, keeping core Texas capacity with an eye to 1.2 GW later on. Partners shifted focus: in April 2026, developer Related Digital and Oracle raised $16bn in debt and equity to build a new mega‑data‑centre in Michigan for OpenAI’s needs.

Rivals seized the opening. In late March 2026 Microsoft snapped up the vacant 900 MW, becoming Crusoe Energy’s new partner to expand the Abilene campus. The upgrade should lift the site’s total capacity to 2.1 GW by mid‑2027, using Nvidia GPUs.

People push back

Data centres are no longer viewed as unambiguous economic engines — they create few jobs once built, yet strain grids, consume water and generate constant noise.

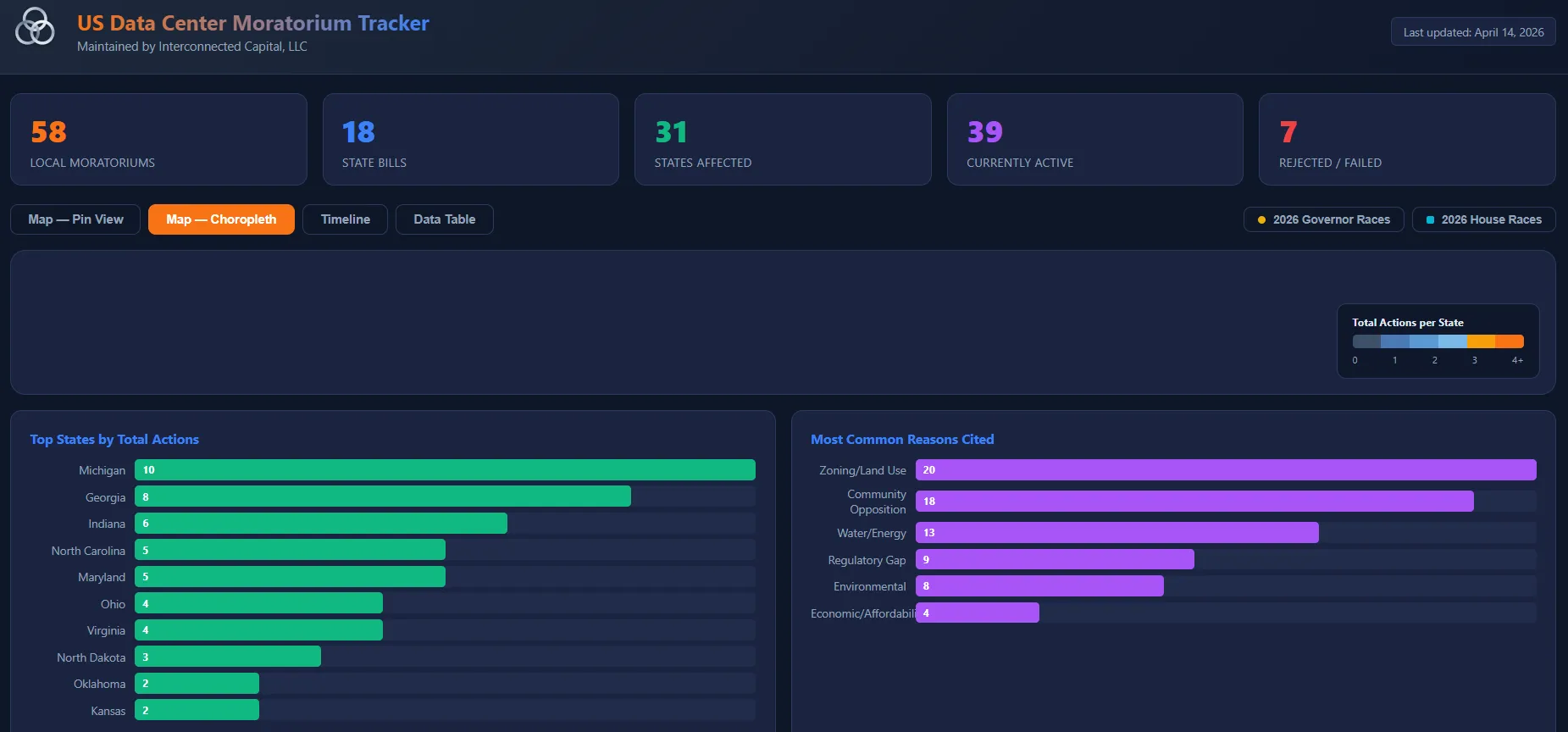

In April 2026 residents of Festus, Missouri, protested a $6bn data‑centre proposal. Locals secured the removal of four of eight city‑council members and launched a petition to oust the rest, including the mayor.

On April 9 residents sued the city, alleging it failed to give the public enough time to review the proposal before voting and made illegal zoning changes for the project. The suit also claims the city held private meetings about the plan instead of public ones.

The approved scheme for an unnamed developer would cover 360 acres.

Similar episodes have unfolded across America in recent months:

- in February 2026 the New Brunswick (New Jersey) city council, under public pressure, rejected a data‑centre deal and opted instead to use 27,000 square feet for a public park;

- that same month, a proposal to annex land into Foristell (Missouri) met resistance over fears it would host a data centre. The decision was revised and the area kept its agricultural zoning;

- in September 2025 Prince George’s County (Maryland) paused data‑centre projects after local protests and set up a task force to study risks;

- in St. Charles (Missouri), less than an hour from Festus, officials are moving to ban data centres permanently after imposing a moratorium in August 2025.

A data center in New Brunswick was canceled tonight when hundreds of residents showed up. When we fight big tech and private equity we win. pic.twitter.com/doZ63Pdwue

— Ben Dziobek (@BenDziobek) February 19, 2026

The growing backlash has increased demand for transparency and real-time data. The team behind the “US Data-Center Moratorium Tracker” is working to identify the companies hiding behind unnamed deal participants and is mapping every jurisdiction that has formally imposed a temporary ban on new builds.

According to the dashboard, as of April 14, 2026, there are 58 moratoria in force in the US.

Fine, then: to space

Energy shortages, delayed components and public protests have stalled the sector.

Per Bloomberg, construction of about half of all planned US data centres has been postponed indefinitely or scrapped. Less than a third of projected capacity is actively being built.

Tech giants and independents are searching for alternatives — from the seabed to orbit.

Between 2014 and 2024 Microsoft explored submerging sealed server capsules. The last large test of Project Natick took place off Scotland’s Orkney Islands from 2018 to 2020. A capsule housing 12 racks with 864 servers was lowered to about 35 metres.

Only six compute units failed over two years underwater. By contrast, the failure rate in the on-land control group was eight times higher. Researchers attributed this to the inert nitrogen atmosphere inside the capsule, the lack of temperature swings and the absence of human intervention, a frequent cause of breakdowns.

The ocean provided a free, effectively limitless heat sink and, contrary to fears, the data centre “did not harm the ecosystem”. An artificial reef even formed around the capsule, attracting fish.

Despite the success, the project was shelved as ill‑suited to AI and hampered by logistics. Any physical intervention required ships, lifting the multi‑tonne capsule from the seabed and then resealing it.

How might space fare? In late 2025, researchers at the 33FG group estimated that by 2030 AI compute in orbit would be cheaper than on Earth.

In February SpaceX filed a request with America’s Federal Communications Commission to launch a constellation of 1m satellites for data centres — a network of orbital facilities linked by laser backhaul.

The logic of space data centres rests on two factors: access to round‑the‑clock solar power and low temperatures for near‑ideal passive cooling.

But the concept faces tough commercial and physical constraints. SpaceX’s leadership has warned that such projects may not be viable at this stage.

Main hurdles:

- launch costs. Even as the price per kilogram falls, sending heavy racks with tungsten radiation shielding remains extremely expensive;

- latency. Real‑time inference needs milliseconds. Shipping massive datasets to and from orbit adds delays that make the setup unsuitable for many tasks. Such facilities may fit only asynchronous model training;

- servicing. You cannot swap a failed GPU in space. Equipment lifetimes are strictly bounded by radiation tolerance.
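The latency hurdle can be illustrated with a rough lower-bound estimate. This is a sketch under stated assumptions, not a model of any announced system: the 550 km altitude, the 1 TB dataset and the 10 Gbit/s link rate are all hypothetical values chosen for the example, and the function names are illustrative.

```python
SPEED_OF_LIGHT_M_S = 299_792_458  # exact, by SI definition


def round_trip_ms(altitude_km):
    """Minimum round-trip signal time to a satellite directly overhead, in ms."""
    return 2 * altitude_km * 1000 / SPEED_OF_LIGHT_M_S * 1000


def transfer_seconds(dataset_bytes, link_bits_per_s):
    """Time to move a dataset at a sustained link rate, ignoring protocol overhead."""
    return dataset_bytes * 8 / link_bits_per_s


# All three values below are assumptions for illustration only.
ASSUMED_ALTITUDE_KM = 550         # hypothetical low-Earth-orbit altitude
ASSUMED_DATASET_BYTES = 10**12    # hypothetical 1 TB dataset
ASSUMED_LINK_BPS = 10**10         # hypothetical 10 Gbit/s link

print(f"Best-case round trip: {round_trip_ms(ASSUMED_ALTITUDE_KM):.2f} ms")
print(
    "Dataset transfer: "
    f"{transfer_seconds(ASSUMED_DATASET_BYTES, ASSUMED_LINK_BPS):.0f} s"
)
```

Under these assumptions, signal propagation alone already costs a few milliseconds before any routing or queuing, and moving a large dataset takes minutes rather than milliseconds. That is consistent with the point above that such facilities may suit asynchronous workloads better than real-time inference.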

Others are joining the orbital push: Google said it aims to build a low‑Earth‑orbit satellite system to harvest solar power for data centres, while Nvidia has announced a compute platform for space‑based facilities.

In 2026 California startup Aetherflux plans to launch orbital solar mini‑farms in satellite form to beam energy down to Earth with lasers.

On April 27, 2026, Meta agreed to procure 1 GW of space-derived power for its data centres from another startup, Overview Energy. According to the orbital power-station developer, the first in-orbit demo is due in 2028, with commercial deliveries in 2030.

The build‑out of AI infrastructure has run into physical and administrative limits. The voracious power draw of new GPU clusters, their need for cooling water and the strain on local grids have changed how residents and municipalities view data centres. Scaling terrestrial compute has become not a matter of capital alone but a knotty logistical and social challenge.

Orbital data‑centre ventures, despite today’s high costs and maintenance barriers, are emerging as a pragmatic response to the ground‑level crunch. In the coming years, firms’ ability to solve the placement problem will determine the pace of progress in computing.
