April Sets Record for Crypto Industry Hacks

April witnessed a record number of hacks in the crypto industry. Analysts at DeFiLlama counted more than 20 incidents during the month.

Arrests of Roblox account thieves near Lviv, a hack of a Chinese task scheduler for mining, and other cybersecurity developments

A round-up of the week’s most important cybersecurity news.

CoinGecko: Quarterly Trading Volume of Tokenized Gold Reaches $90.7 Billion

In the first quarter, the spot trading volume of tokenized gold reached $90.7 billion, surpassing the total for 2025 ($84.64 billion), according to CoinGecko.

Tether Reports $1.04 Billion Profit for the Quarter

Tether has released its financial report for the first quarter of 2026, audited by BDO. The net profit of the stablecoin issuer amounted to $1.04 billion.

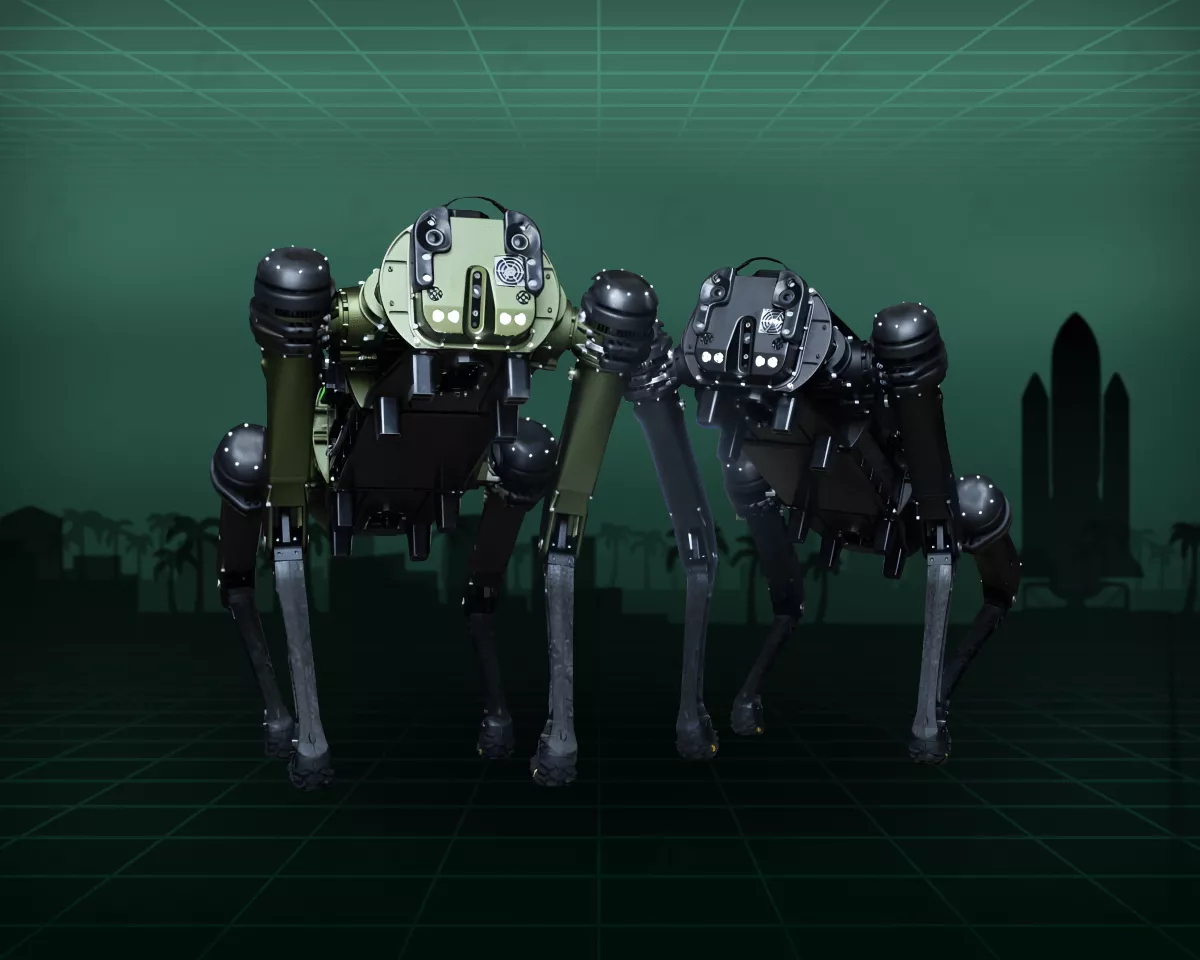

Pentagon Secures AI Contracts with Nvidia, Microsoft, and AWS Following Anthropic Dispute

The Pentagon has entered into agreements with Nvidia, Microsoft, Reflection, and Amazon Web Services (AWS) to deploy advanced AI tools in classified military environments.

When intelligence isn’t enough

Construction of more than half of US data centres is on hold. Power and chips were only the beginning; the human factor is what the AI industry was not ready for.

The Wisdom of Whales: How prediction markets became corporate barbershops

Two recent incidents that expose outcome manipulation on Polymarket.

$2.15 Instead of 2–3% of Turnover: How goodPayments Crypto Processing Works

goodPayments is a non-custodial crypto payment processor for accepting USDT and TRX on the TRON network. The service forwards funds directly to the merchant's wallet and charges a flat fee of 6.5/13 TRX ($2.15/$4.30) with no percentage of turnover. Here's how the product works — and at what turnover the flat-rate model pays off.
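As a rough illustration of the break-even question, the sketch below compares the flat fees quoted above ($2.15 and $4.30) with a 2% or 3% percentage fee. It assumes the flat fee is charged once per payment, which the teaser does not state explicitly; the function name `break_even_amount` is hypothetical and not part of any goodPayments API.

```python
def break_even_amount(flat_fee_usd: float, percent_rate: float) -> float:
    """Payment size (USD) at which a flat fee costs the same as a percentage fee.

    Assumption: the flat fee applies once per payment.
    """
    return flat_fee_usd / percent_rate


# Flat fees quoted in the article, compared against 2% and 3% processors.
for flat_fee in (2.15, 4.30):
    for rate in (0.02, 0.03):
        amount = break_even_amount(flat_fee, rate)
        print(f"flat ${flat_fee:.2f} vs {rate:.0%}: break-even at ${amount:,.2f} per payment")
```

Under that assumption, payments larger than the break-even amount are cheaper on the flat fee, and smaller ones are cheaper on the percentage fee.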

Analysts Warn of Potential Cascade Liquidations in Ethereum Positions

Analysts from CryptoQuant and Arkham have observed unusual metrics in derivatives of the second-largest cryptocurrency by market capitalization.

Humanoid Robots to Handle Luggage and Cleaning at Tokyo Airport

Japan Airlines, in collaboration with GMO AI & Robotics, has initiated trials of humanoid robots for ground operations at Tokyo's Haneda Airport.

What Is Tokenized Gold and Why Are Billions Being Invested in It?

An explainer on tokenised gold: how it works, its pros and pitfalls, and why investors are piling in.

The funding rate: how it helps anticipate price reversals in bitcoin and Ethereum

Learn how the futures-balancing mechanism flags market capitulation or euphoria before the price moves.

AI Bot Based on Claude Outperforms S&P 500 by Mimicking Creator’s Style

Software engineer Jake Nesler developed a trading AI agent using Claude from Anthropic. The bot mimics the creator's trading style.

CryptoQuant Labels April Bitcoin Surge as Speculative

April's Bitcoin rally from $66,000 to $79,000 was driven by perpetual futures, while spot demand remained negative, CryptoQuant noted.

Arbitrum DAO to Vote on $71 Million Transfer to DeFi United Fund

The governance of the L2 network Arbitrum has initiated a vote on transferring 30,766 ETH (~$71 million) to the DeFi United fund, established to aid the sector's recovery following the Kelp hack.

PayPal Restructures Business to Establish Cryptocurrency Division

Payment company PayPal announced a business reorganization. The management structure will be divided into three areas, one of which will focus on digital assets.

Glassnode Observes Easing Bitcoin Seller Pressure

Bitcoin prices are caught between the support zone of $65,000-$70,000 and resistance at $79,000. Analysts at Glassnode have noted a record number of short positions and a high likelihood of a short squeeze.

Layer 2 Project MegaETH Launches MEGA Token

On April 30, the Layer 2 network MegaETH launched the MEGA token. The asset began trading on leading exchanges at approximately $0.2.

Tether Proposes Bitcoin Conglomerate with XXI, Strike, and Elektron

Tether's investment division has proposed merging three companies to create a leading public entity in the bitcoin industry.

Hacker Extracts Over $5 Million from Wasabi Protocol

On April 30th, the Wasabi project was hacked. According to PeckShield experts, the damage exceeded $5 million.

Russian Government Approves Tax Amendments for Cryptocurrencies and Digital Financial Assets

The bill on the taxation of DFAs and cryptocurrencies will be submitted to the State Duma in early May, announced Alexey Yakovlev, Director of the Financial Policy Department of the Ministry of Finance.
